User Guide: FPGA-Accelerated Deep-Learning Inference with Binarized Neural Networks

Missing Link Electronics (MLE) has ported the open-source BNN-PYNQ demo from Xilinx to Amazon EC2 F1 instances. The port demonstrates the performance benefits of running deep convolutional neural network inference at reduced precision on an FPGA, together with the advantages of efficient, cloud-based heterogeneous computing with FPGAs on Amazon Web Services (AWS).

This User Guide describes how to set up and start an Amazon EC2 F1 instance and how to run the demo:

- The demo can be found in the AWS Marketplace by following this link.

- To get started with the demo, create an instance using MLE’s AMI from the AWS Marketplace. This requires an AWS account whose Amazon EC2 limit for f1.2xlarge is set to at least one (details for doing so can be found here).

- Afterwards you can explore the demo functionality using an interactive, Python-based web interface, namely Jupyter, in your web browser.

For more information about MLE’s offerings for accelerating Neural Networks with FPGAs, please visit our Machine-Learning website.

Setting Up the Instance

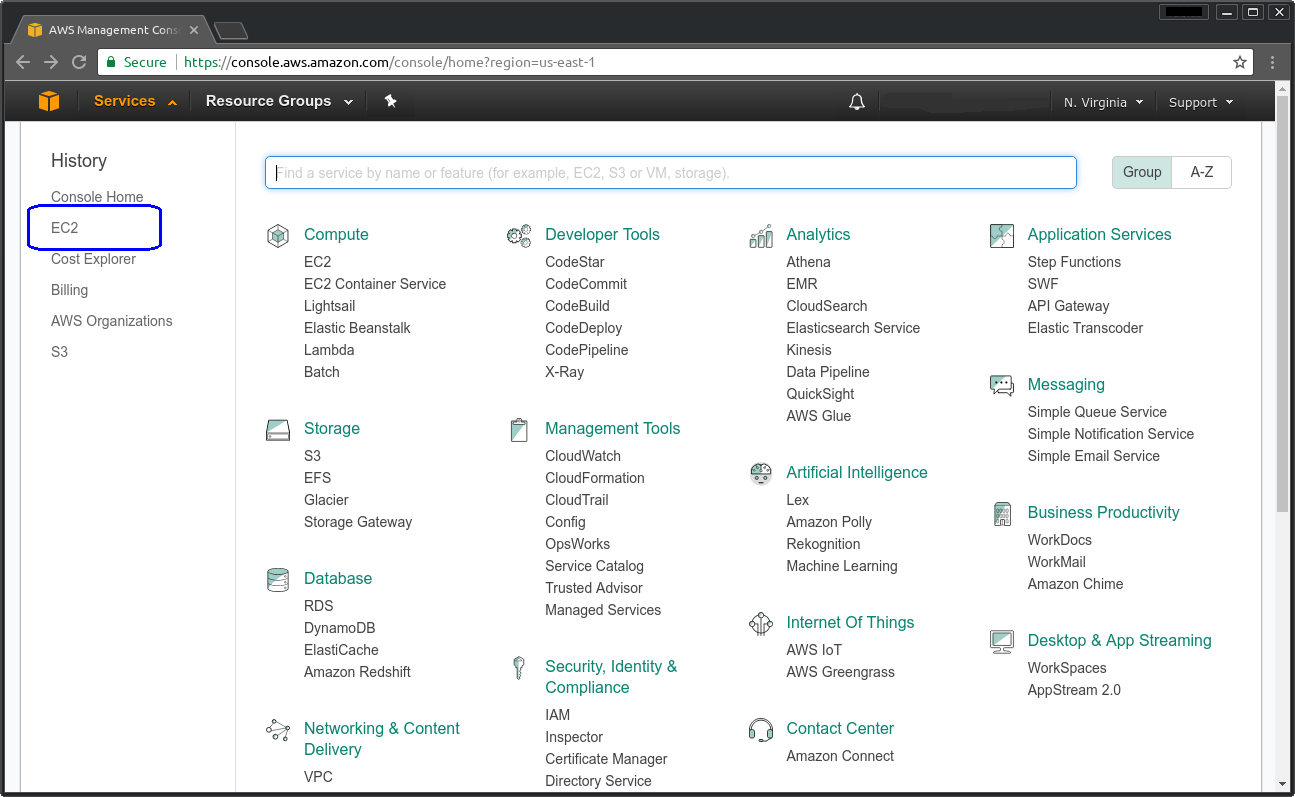

To get started you will need to log into your AWS account and browse to the AWS web console shown in Figure 1.

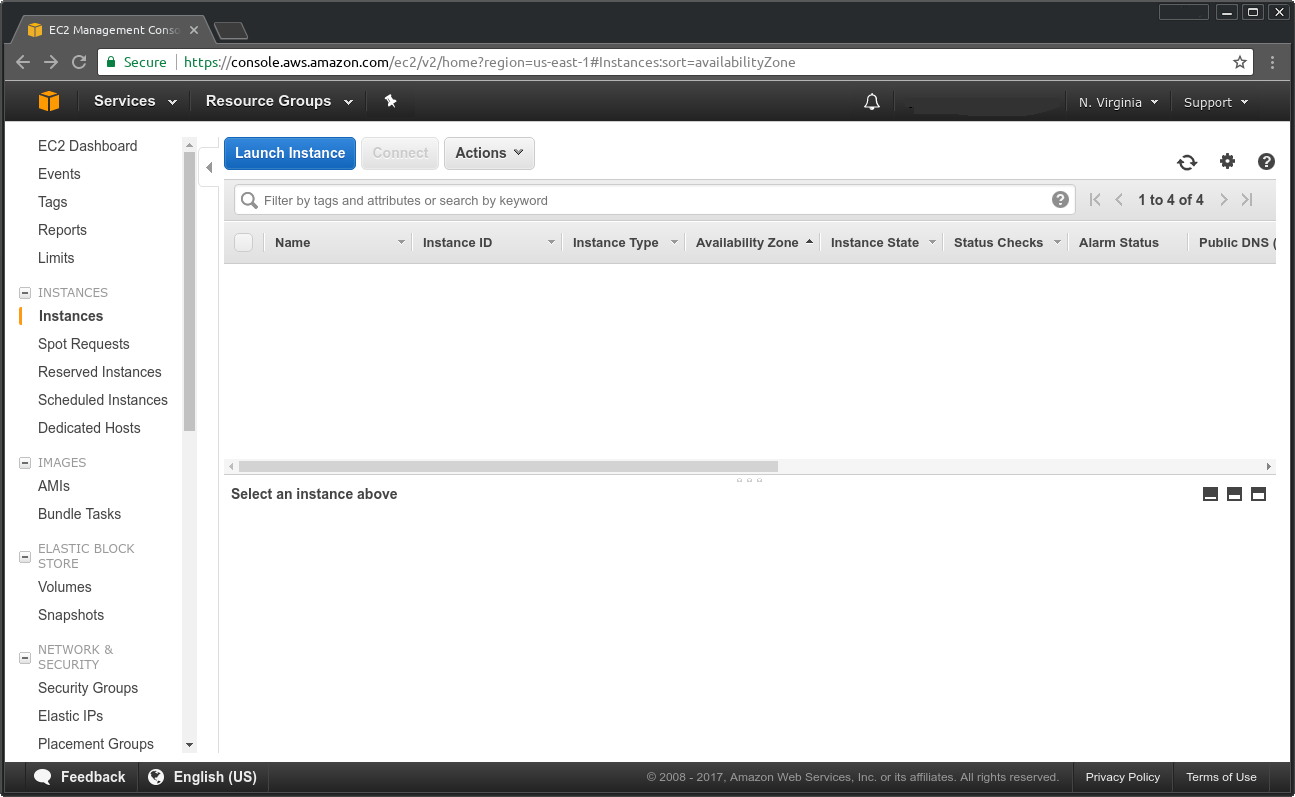

From here, browse to “Service - EC2” and then to the Instances section, as shown in Figure 2. Create a new instance by clicking on “Launch Instance”.

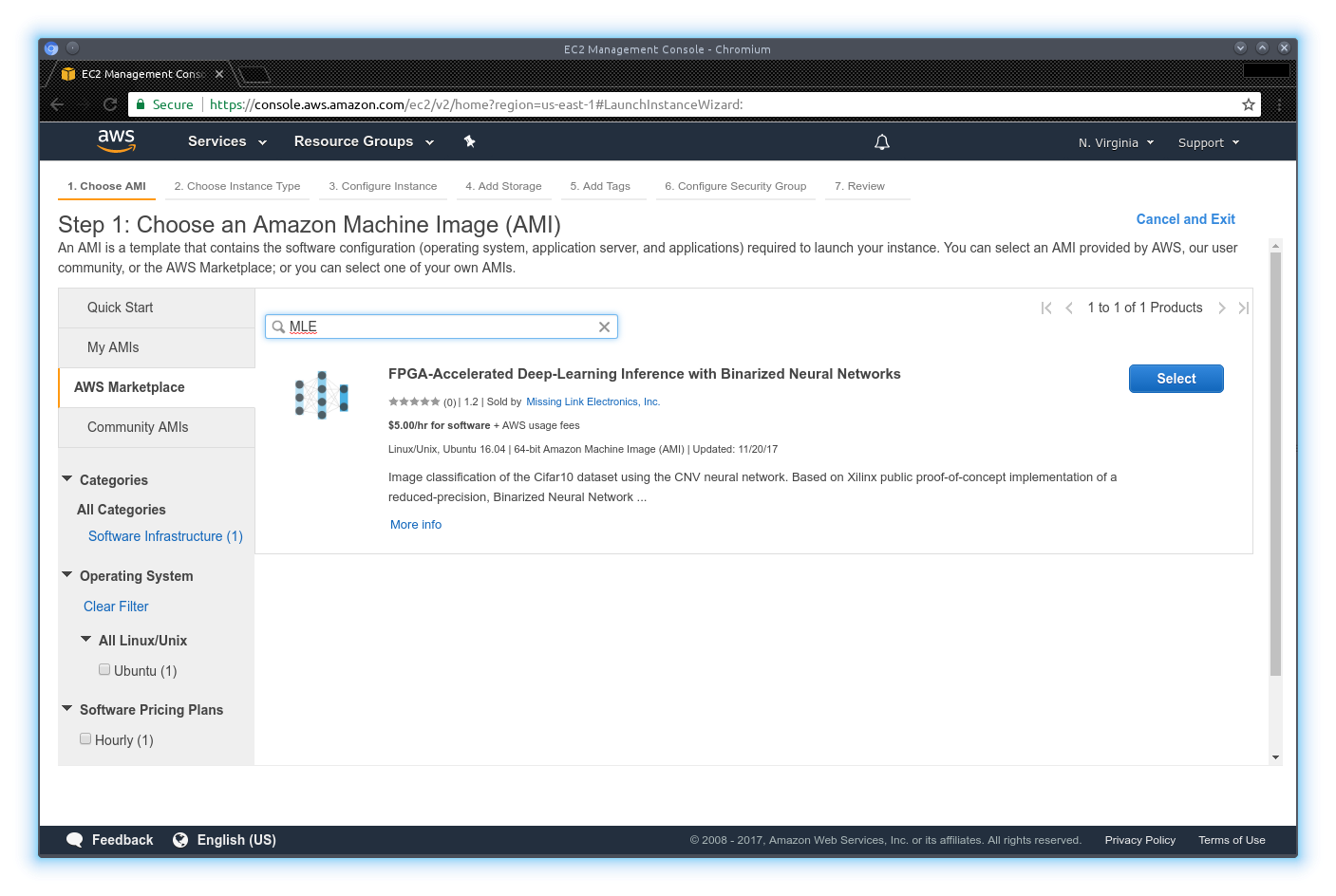

Go to the AWS Marketplace and search for “MLE” as shown in Figure 3. You should find MLE’s AMI, which you can select to create your instance.

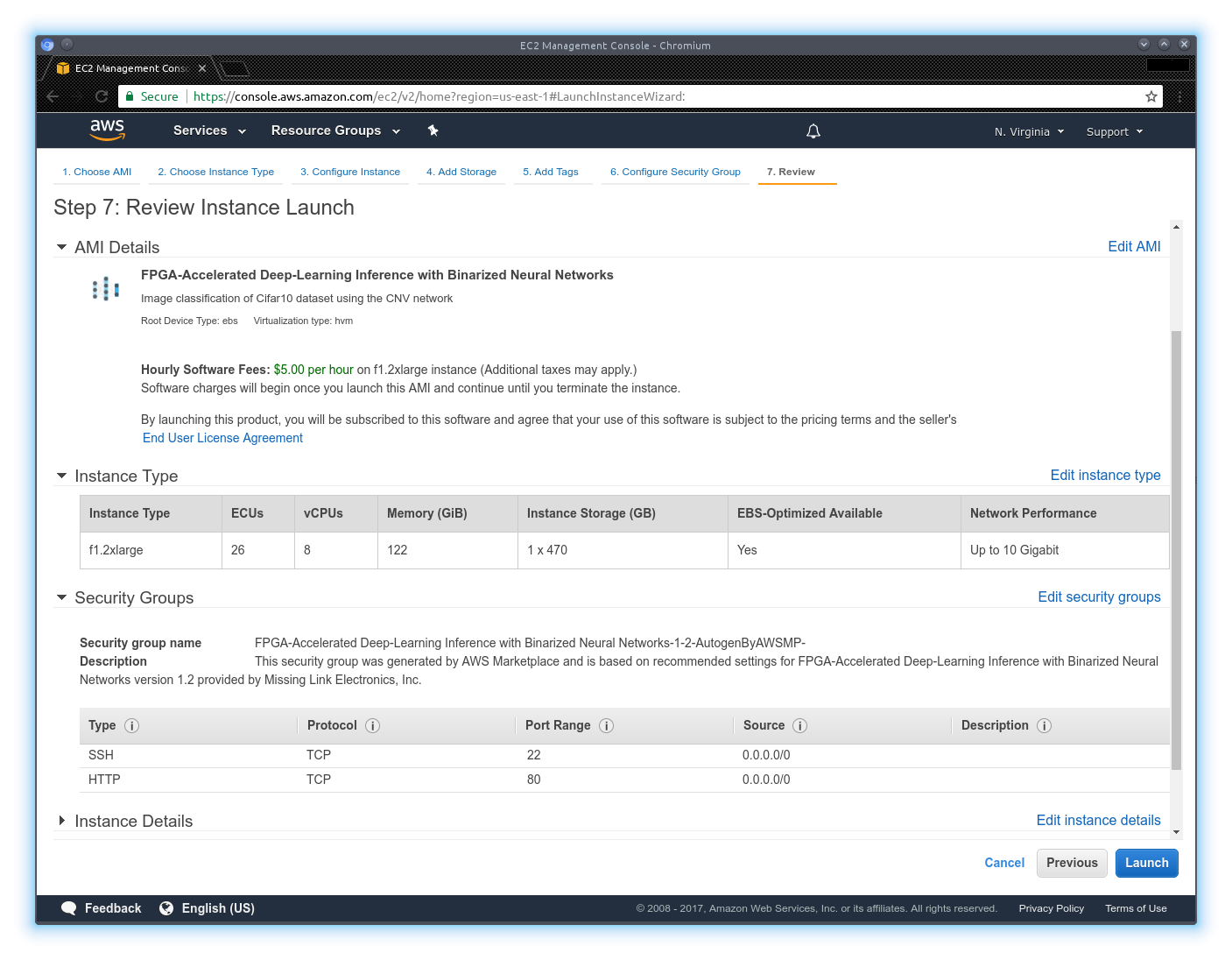

Now you may configure the instance to suit your needs. However, you do not need to change anything here, as the defaults match the needs of this AMI. Figure 4 shows the resulting overview dialog, which must list an f1.2xlarge instance with two security groups: port 80 for the HTTP web interface (mandatory) and port 22 for SSH (optional).

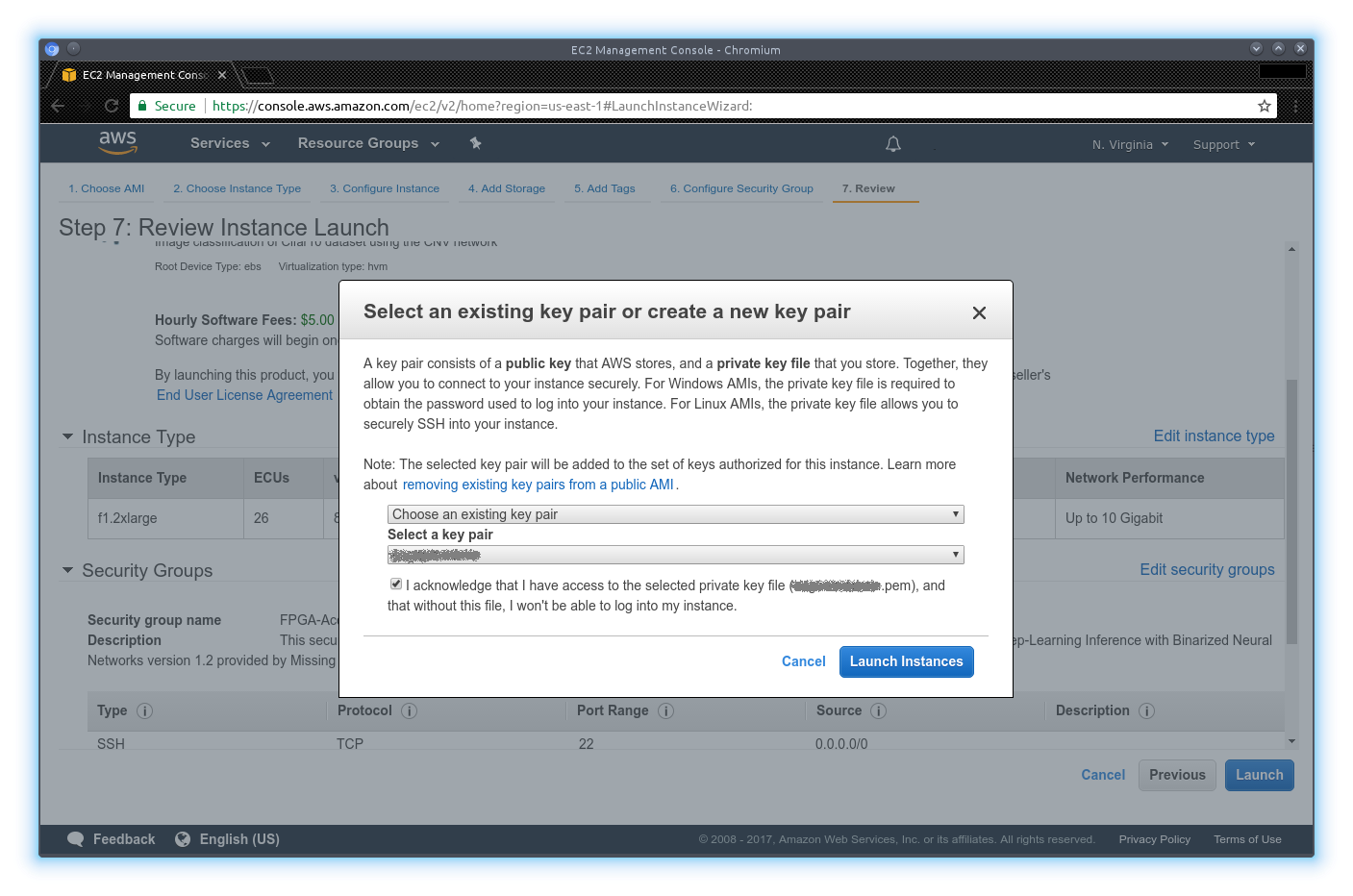

Before launching your instance, you need to select or generate a key file, as for all Amazon EC2 instances. The prompt to do so is shown in Figure 5. To log in via SSH, use the username ubuntu together with the key file specified here.

Figure 1 AWS Web Console

Figure 2 AWS Instances view

Figure 3 AWS Marketplace with AMI to be selected

Figure 4 Configured Amazon EC2 F1 instance with correct default options

Figure 5 Configured Amazon EC2 F1 instance asking for keyfile before launching it
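The optional SSH login described above can also be scripted. The following is a minimal sketch, assuming the OpenSSH client `ssh` is installed on your local machine. The helper name `build_ssh_command`, the host name, and the key file name are hypothetical placeholders, not part of the AMI; replace them with the Public DNS of your instance and the key file you selected at launch.

```python
import shlex
from typing import List


def build_ssh_command(public_dns: str, key_file: str, user: str = "ubuntu") -> List[str]:
    """Return the argument list for an SSH login to the instance.

    `user` defaults to "ubuntu", the username named in this guide.
    """
    if not public_dns:
        raise ValueError("public_dns must not be empty")
    if not key_file:
        raise ValueError("key_file must not be empty")
    # -i selects the private key file that was chosen when launching the instance.
    return ["ssh", "-i", key_file, f"{user}@{public_dns}"]


# Hypothetical placeholder values, for illustration only.
command = build_ssh_command("ec2-203-0-113-10.compute-1.amazonaws.com", "my-key.pem")
# Print a shell-safe command line that can be pasted into a terminal.
print(shlex.join(command))
```

Returning an argument list rather than a single string means the command can be passed directly to `subprocess.run` without shell quoting issues. SSH access only works if port 22 is open in the security groups of the instance.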

Running the Demo

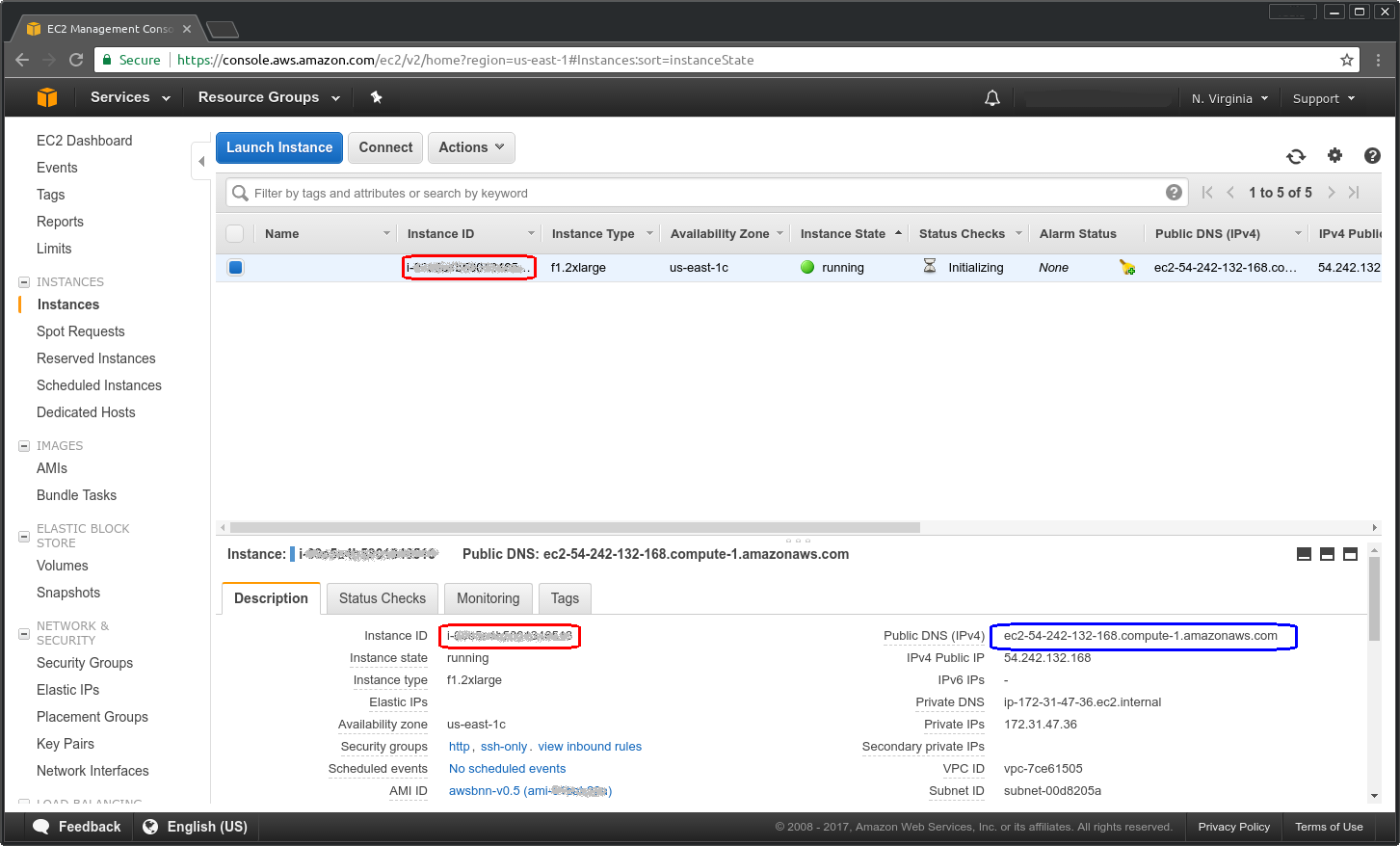

When the instance is launched, click the “View instances” button, which brings you back to the Amazon EC2 instances overview page. Here you will see the newly created instance in state “running”. Select the instance and copy its Public DNS (marked in Figure 6).

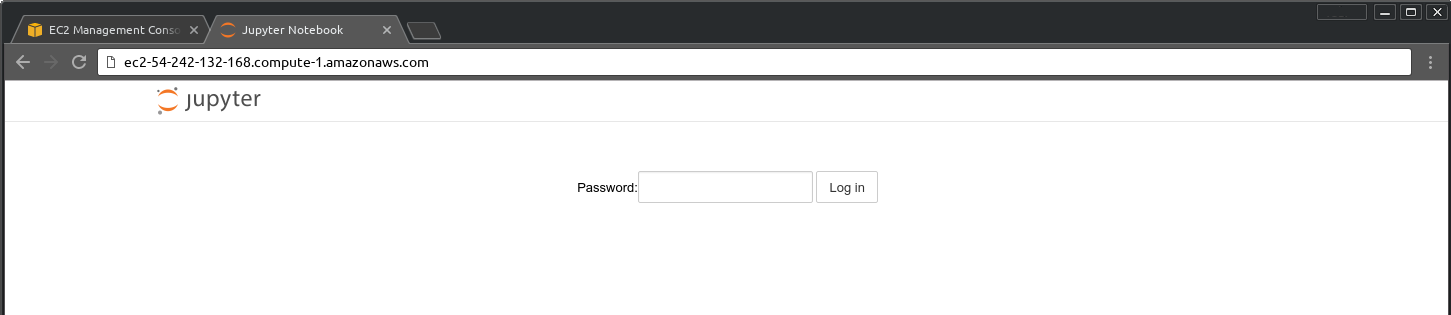

Browse to this address with your web browser in a new tab. It takes around 2 minutes for the instance to be set up and booted.
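Instead of reloading the browser tab during the boot phase, you could poll the address until the web interface answers. The following is a minimal sketch using only the Python standard library. The function names `http_probe` and `wait_until_ready`, the five-minute limit, and the ten-second interval are assumptions chosen for illustration; the URL must be built from the Public DNS of your own instance.

```python
import time
import urllib.error
import urllib.request
from typing import Callable


def http_probe(url: str, timeout_s: float = 5.0) -> bool:
    """Return True if the server at `url` sends any HTTP response."""
    try:
        with urllib.request.urlopen(url, timeout=timeout_s):
            return True
    except urllib.error.HTTPError:
        # The server answered, even if with an error status code.
        return True
    except (urllib.error.URLError, OSError):
        # Connection refused, DNS failure, or timeout: not reachable yet.
        return False


def wait_until_ready(
    url: str,
    probe: Callable[[str], bool] = http_probe,
    max_wait_s: float = 300.0,
    interval_s: float = 10.0,
    sleep: Callable[[float], None] = time.sleep,
) -> bool:
    """Probe `url` repeatedly until it answers or `max_wait_s` has elapsed."""
    waited = 0.0
    while True:
        if probe(url):
            return True
        if waited >= max_wait_s:
            return False
        sleep(interval_s)
        waited += interval_s


# Example call (replace the placeholder with your instance's Public DNS):
# ready = wait_until_ready("http://<public-dns-of-your-instance>/")
```

The `probe` and `sleep` parameters are injectable so that the loop can be exercised without network access. A `True` result only indicates that something answers on port 80; it does not verify that the Jupyter login page itself is being served.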

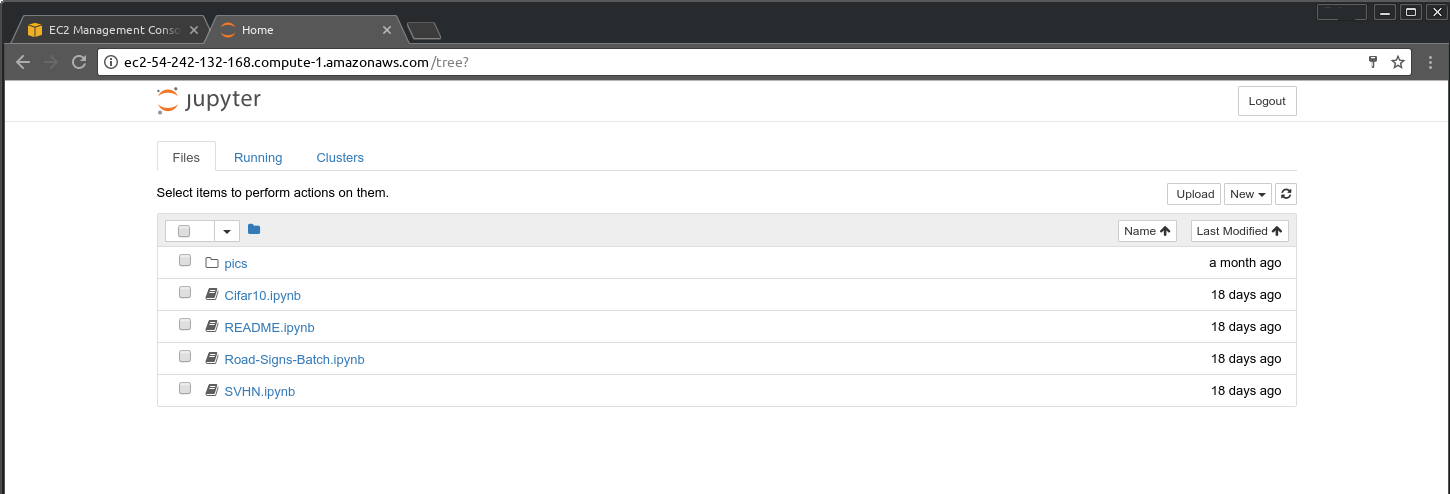

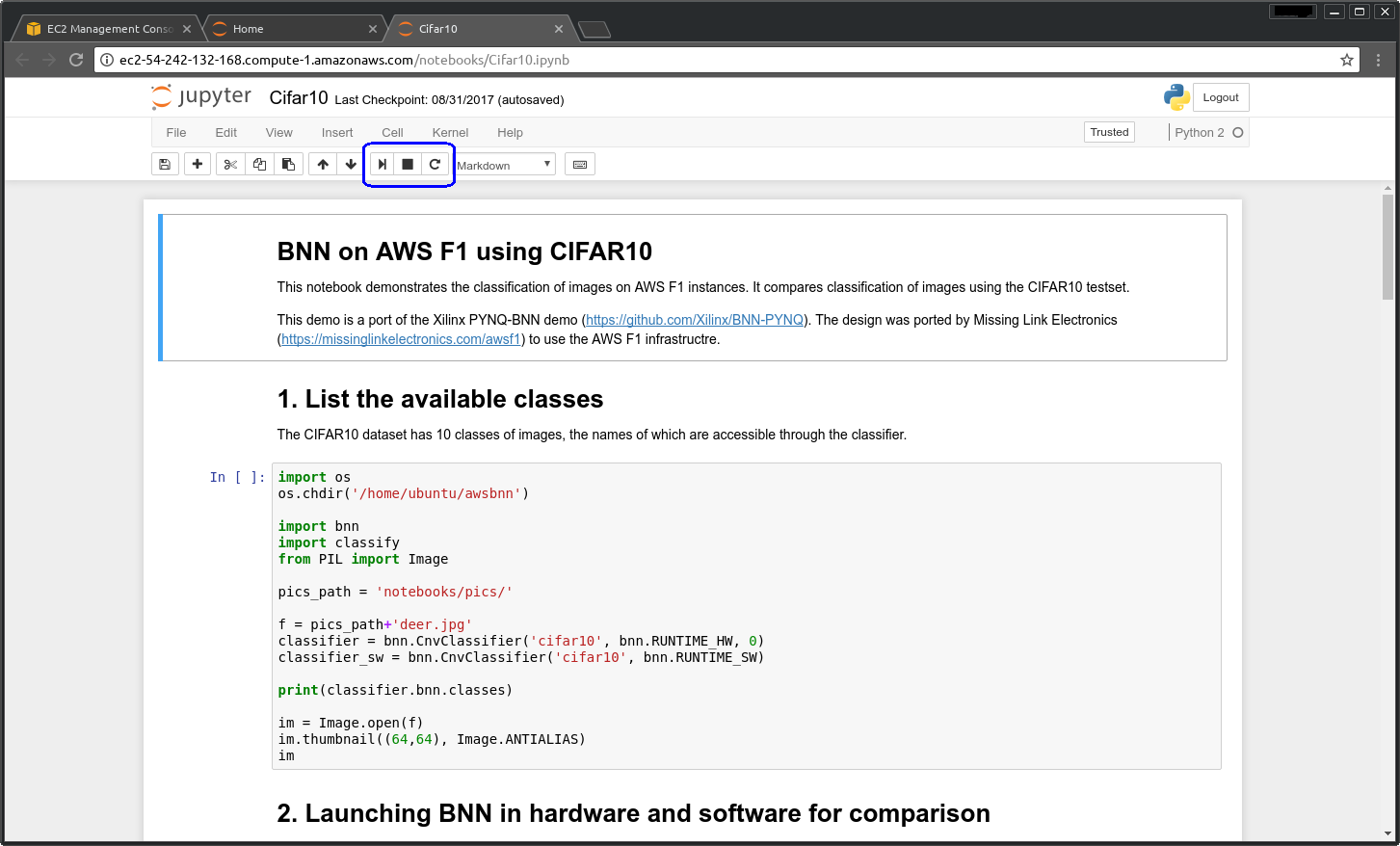

When the machine has booted, you will see the Jupyter login screen shown in Figure 7. The password is the instance ID, highlighted in red in Figure 6. Once logged in, you will see the screen shown in Figure 8, which lists four so-called notebooks. Each of these is an example you can execute. Click on an example to open it in a new tab. Within the example, you can execute each code block by pressing the Play button or “Shift+Return”. As an example, Figure 9 shows the Cifar10.ipynb notebook.

Figure 6 Launched Amazon EC2 F1 instance with assigned DNS

Figure 7 Jupyter login screen

Figure 8 Jupyter notebooks screen

Figure 9 Jupyter notebook for CIFAR10 example

Conclusion & Contact Info

We hope that running this demo has shown you the performance benefits of Machine-Learning Inference on FPGAs when combined with reduced-precision techniques.

Again, for more information about MLE’s offerings for accelerating Neural Networks with FPGAs, please visit our Machine-Learning website.

Or, if you are interested in exploring these techniques for your application, please contact us directly.