Growing Demand for Deterministic Networking

There is a growing need to connect computers with lower latency and higher bandwidth, in particular for High-Performance Computing (HPC) in the data center and for embedded systems such as advanced industrial robotics or autonomous vehicles. These applications require so-called deterministic networking. Processing TCP/IP-based network protocols at speeds of 10 Gbps and beyond demands kernel-bypass solutions (such as Intel’s DPDK, Solarflare/Xilinx Onload, or Mellanox/NVIDIA VMA) and/or so-called TOEs (TCP Offload Engines).

Domain-Specific Architectures (DSA) use so-called heterogeneous computing elements, also known as Cores, with the objective of putting the compute burden where it belongs. This is a well-established approach going back to the early days, when an x86 CPU was partnered with an x87 coprocessor for better floating-point processing. Today, it is common to deploy various flavors of Cores, for example:

- DSP Cores for digital signal processing in telecommunications

- Shader Cores optimized for image processing, as found in modern Graphics Processing Units (GPUs)

- Tensor Processing Unit (TPU) Cores optimized for Artificial Intelligence and Deep Learning

The reason is that such special-purpose accelerator Cores, whether fixed-function or programmable, are optimized for a particular domain. When properly used, they not only take processing load off the general-purpose CPU but also deliver better overall performance (data processed per unit of time) and better efficiency (performance per Watt).
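
The two metrics named above can be written down compactly. The following is only a notational sketch: the symbols $D$ (amount of data processed), $t$ (elapsed time), $P_{\text{el}}$ (average electrical power drawn) and $E$ (energy consumed) are shorthand introduced here for illustration and are not used elsewhere in this paper.

$$
\text{Performance} = \frac{D}{t},
\qquad
\text{Efficiency} = \frac{\text{Performance}}{P_{\text{el}}} = \frac{D}{t \cdot P_{\text{el}}} = \frac{D}{E}
$$

Because $E = t \cdot P_{\text{el}}$, performance per Watt is the same quantity as data processed per Joule. A Core that completes the same work with less energy therefore improves efficiency even if raw throughput stays unchanged.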

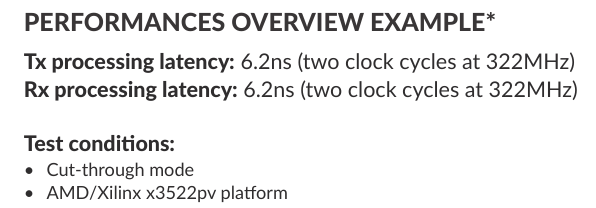

Over the following pages we will make a case for processing TCP/IP over TSN (Time-Sensitive Networking) over 10/25/50/100 Gigabit Ethernet on dedicated Cores, an approach that has significant advantages, in particular for real-time Ethernet and Deterministic Networking. These so-called TCP-TSN-Cores can be integrated either in FPGAs or in SoCs (ASIC and ASSP). As we will show, TCP-TSN-Cores are more than just a TOE, the commonly used approach for network protocol acceleration. By running the entire network protocol stack from OSI Layer 2 to at least Layer 4 in a dedicated integrated circuit (a so-called Full Accelerator), we can remove general-purpose CPUs entirely from the datapath.

Hence, TCP-TSN-Cores can deliver very low, bounded, and deterministic latency with the predictable scalability needed for 10/25/50/100 Gigabit Deterministic Networking.