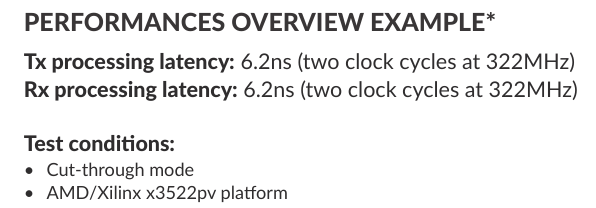

Testdrive The Future of Networking: Evaluate MLE’s FPGA-Powered TCP/IP Stack in the Cloud!

Our Mission: If It Is Packets, We Make It Go Faster!

And with packets we mean:

- Networking using TCP/UDP/IP over 10G/25G/50G/100G Ethernet
- PCI Express (PCIe), CXL, OpenCAPI
- Data storage using SATA, SAS, USB, NVMe
- Video image processing using HDMI, DisplayPort, SDI, FPD-III

In today’s fast-paced digital landscape, robust and efficient network communication is critical. For developers seeking to optimize network performance, MLE offers a groundbreaking solution: an FPGA-accelerated TCP/IP stack. Now, you can experience the power of this technology firsthand by evaluating it in our cloud-based environment, before committing to a hardware purchase.

The Need for Speed: Overcoming Software Limitations

Traditional software-based TCP/IP stacks often struggle to keep up with the demands of high-bandwidth, low-latency applications. Processing overhead can lead to bottlenecks and reduced efficiency. MLE’s FPGA-accelerated TCP/IP stack addresses these challenges by offloading network processing to dedicated hardware.

The MLE Advantage: Hardware Acceleration for Optimal Performance

Our Network Protocol Accelerator (NPAP) leverages the parallel processing capabilities of Field-Programmable Gate Arrays (FPGAs) to implement a full TCP/IP/UDP stack directly in hardware. This approach drastically reduces latency and maximizes throughput, offering a significant performance advantage over software-based solutions.

Try Before You Buy: Cloud-Based Evaluation

MLE understands the importance of thorough
